
There are many ways to pull every link out of a web page. In Python you can use the requests and bs4 packages (`pip3 install requests bs4 colorama`), and in .NET the HTML Agility Pack is a good tool for scraping URLs. The quickest route, though, is a few lines of JavaScript in the browser console. The JavaScript snippets to extract links are given below.

Extract Links Only

To extract just the links without the anchor text, select every anchor element with `document.querySelectorAll('a')` and print each element's `href`:

`var links = document.querySelectorAll('a'); for (var i = 0; i < links.length; i++) { console.log(links[i].href); }`

Extract URLs + Corresponding Anchor Text – Styled Output (For Chrome & Firefox)

If you are using Chrome or Firefox, you can style the output with the `%c` directive of `console.log`. The following prints each link's number in red, its anchor text in green and its URL in blue:

`var urls = document.querySelectorAll('a'); for (var i = 0; i < urls.length; i++) { console.log('%c#' + i + ' > %c' + urls[i].innerHTML + ' > %c' + urls[i].href, 'color:red', 'color:green', 'color:blue'); }`

Demo of extracting links from a Wikipedia page using the dev console.

Extract External URLs Only

External links are the ones that point outside the current domain. To extract only those, compare each link's `hostname` with `location.hostname` and keep the links where the two differ.
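The hostname comparison for external links can be sketched as a small function. This is a minimal sketch, not the original author's code: `externalLinks` is a hypothetical helper name, and it assumes that only `http:`/`https:` links count and that a link is external whenever its hostname differs from the page's, so subdomains such as `blog.example.com` are treated as external too. It resolves relative `href` values with the standard `URL` constructor, so it also runs outside the browser.

```javascript
// Sketch: keep only links whose hostname differs from the current page's.
// `externalLinks` is a hypothetical helper name, not a browser API.
function externalLinks(hrefs, pageUrl) {
  const pageHost = new URL(pageUrl).hostname;
  const result = [];
  for (const href of hrefs) {
    let url;
    try {
      // Resolve relative hrefs against the page URL, as the browser does.
      url = new URL(href, pageUrl);
    } catch (e) {
      continue; // skip values that cannot be parsed as URLs
    }
    // Ignore non-web schemes such as mailto: or javascript:.
    if (url.protocol !== 'http:' && url.protocol !== 'https:') continue;
    // Strict comparison: a different subdomain also counts as external.
    if (url.hostname !== pageHost) result.push(url.href);
  }
  return result;
}

// In a browser console, feed it the raw href of every anchor on the page.
if (typeof document !== 'undefined' && typeof location !== 'undefined') {
  const hrefs = Array.from(document.querySelectorAll('a[href]'), a => a.getAttribute('href'));
  externalLinks(hrefs, location.href).forEach(u => console.log(u));
}
```

If you want `www.example.com` and `example.com` treated as the same site, you would need to normalize hostnames before comparing them.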

The browser console is an excellent tool for testing and debugging. You can write JavaScript code and run it on the current page to do all sorts of fancy things, and I can't stress enough how useful that is! To open it in Chrome, press Cmd + Option + I on Mac or Ctrl + Shift + I on Windows and switch to the Console tab, or press Cmd + Option + J / Ctrl + Shift + J to jump straight to the console.

If you'd rather not write any code, an online link extractor does the same job: type in the URL of a web page and press 'Extract'. Such a service parses the provided page and discovers all anchor `href` attributes, but it does not check the content of the documents those links point to. In Power Automate Desktop (PAD), drag the 'Extract data from webpage' action into the PAD editor and double-click it to open it (do not close it). While keeping this action open, go to the website, where a 'Live web helper' appears automatically. Right-click the first link, select its href element, then Ctrl + left-click.

Extract URLs + Corresponding Anchor Text (Cross-Browser)

The following is cross-browser code for extracting URLs along with their anchor text. Copy the code, paste it into the console and hit Enter:

`var urls = document.querySelectorAll('a'); for (var i = 0; i < urls.length; i++) { console.log('#' + i + ' > ' + urls[i].innerHTML + ' > ' + urls[i].href); }`
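To get the URL and anchor text list out of the console and into a spreadsheet, you could format it as tab-separated lines. The sketch below uses a hypothetical `linksToTsv` helper; it reads `textContent` rather than `innerHTML` so nested markup such as `<img>` tags does not leak into the text column, and it drops repeated URLs. Both choices are assumptions about what you want, not part of the snippets above.

```javascript
// Sketch: turn anchors into "text<TAB>url" lines, dropping duplicate URLs.
// Works on any array-like of objects with `href` and `textContent`
// (real <a> elements in a browser, or plain objects in a test).
function linksToTsv(anchors) {
  const seen = new Set();
  const lines = [];
  for (const a of Array.from(anchors)) {
    if (!a.href || seen.has(a.href)) continue; // skip empty or repeated URLs
    seen.add(a.href);
    // Collapse whitespace (including tabs/newlines) so each link stays on one row.
    const text = (a.textContent || '').replace(/\s+/g, ' ').trim();
    lines.push(text + '\t' + a.href);
  }
  return lines.join('\n');
}

// In a browser console, print the table for the current page.
if (typeof document !== 'undefined') {
  console.log(linksToTsv(document.querySelectorAll('a')));
}
```

In Chrome and Firefox, the console-only `copy()` helper can place the result on the clipboard instead: `copy(linksToTsv(document.querySelectorAll('a')))`. `copy()` exists only in the developer console, not in scripts running on the page.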

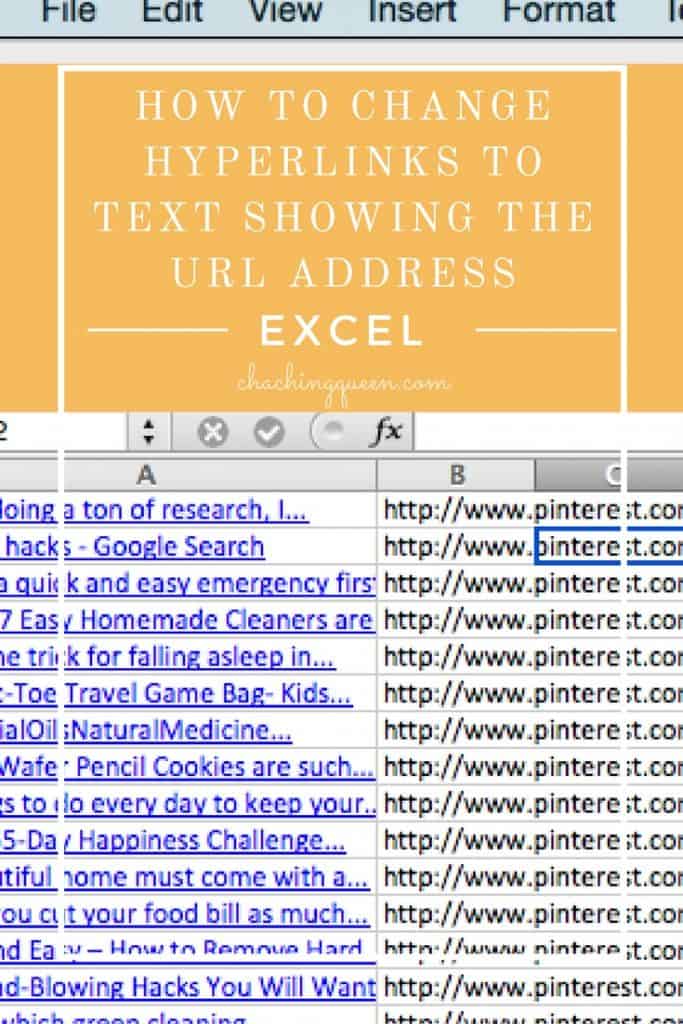

The console is where you type, or copy and paste, snippets of code. You can also open the developer console by hitting F12, or by right-clicking the page and selecting Inspect or Inspect Element, depending on your browser of choice. Two other techniques to extract links from a page are also shared here for people who don't want to get their hands dirty with code. If you are impressed with this, do learn some JavaScript, as it comes in very handy.

Extracting URLs Using the Dev Tools Console

What do you do when you want to export all or specific links from a web page? Copying them one after another is monotonous and pointless, especially when you can automate it with a line of JavaScript code. This article serves as a short demonstration of how you can use browser developer consoles to scrape data from a web page. Web scrapers are used to scrape anything from prices, descriptions and statistics to code, and they can also pull specific HTML attributes such as class and title from any site.

Last year I blogged about how to use the Power Query Text.BetweenDelimiters() function to extract all the links from the href attributes in the source of a web page. That code was reasonably simple, but there's now an even easier way to solve the same problem using the new Html.Table() function.

In PHP, all the URLs or links are extracted from the web page's HTML content using the DOMDocument class, and every link is validated with FILTER_VALIDATE_URL before it is returned, so only valid URLs are printed.
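The delimiter idea and the validation step can also be sketched in JavaScript when all you have is the raw HTML source. This is a rough analogue, not the Power Query or PHP code itself: a regular expression stands in for the delimiter search and for DOMDocument, and a check built on the standard `URL` constructor stands in for FILTER_VALIDATE_URL, whose exact rules it does not reproduce. `hrefsFromSource` and `isValidWebUrl` are hypothetical names.

```javascript
// Sketch: pull href values out of raw HTML text by looking between the
// delimiters `href="` and `"`. A regex is NOT a full HTML parser:
// single-quoted or unquoted attributes are missed, HTML entities such as
// &amp; are left encoded, and hrefs inside comments or scripts are included.
function hrefsFromSource(html) {
  const found = [];
  const pattern = /href\s*=\s*"([^"]*)"/gi;
  let match;
  while ((match = pattern.exec(html)) !== null) {
    found.push(match[1]);
  }
  return found;
}

// Rough stand-in for a "valid URL" check: the string must parse on its own
// (no base URL, so relative links fail) and use http or https.
function isValidWebUrl(value) {
  try {
    const url = new URL(value);
    return url.protocol === 'http:' || url.protocol === 'https:';
  } catch (e) {
    return false;
  }
}
```

In Node.js 18 or later, where `fetch` is built in, you could get a page's source with `const html = await (await fetch(pageUrl)).text();` and then call `hrefsFromSource(html).filter(isValidWebUrl)`. Relative links are rejected by this check, so resolve them against the page URL first if you need them.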

You can easily get all URLs from a web page using PHP. The file_get_contents() function fetches the web page content from a URL, the fetched content is stored in the $urlContent variable, and the links are then read from that HTML.

How to Use the Link Extractor

Dedicated tools can do this too. A custom extraction feature lets you scrape any data from the HTML of a web page using CSSPath, XPath and regex. There are two methods to use the link extractor, namely by domain or by search on a specific page. Choosing the domain option is beneficial if you want to extract all links from a website and identify any existing link issues.

Extracting URLs from a website is useful in many cases, and generating a sitemap from a website URL is one of them.
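Turning extracted links into a sitemap can be sketched in a few lines. The `buildSitemap` name is hypothetical; it assumes you only want pages on the same origin as the site URL, strips `#fragment` parts (they point into a page already listed), and emits just the required `<loc>` element of the sitemaps.org protocol, with XML special characters escaped.

```javascript
// Sketch: build a minimal XML sitemap from a list of extracted links,
// keeping only URLs on the same origin as `siteUrl` and dropping duplicates.
// Only the required <loc> element is emitted; no lastmod/changefreq/priority.
function buildSitemap(links, siteUrl) {
  const origin = new URL(siteUrl).origin;
  const unique = new Set();
  for (const link of links) {
    let url;
    try {
      url = new URL(link, siteUrl); // resolve relative links
    } catch (e) {
      continue; // skip unparseable values
    }
    if (url.origin !== origin) continue; // a sitemap lists this site's pages only
    url.hash = ''; // #fragments point into the same page
    unique.add(url.href);
  }
  // Escape characters that are special in XML.
  const escape = s => s.replace(/&/g, '&amp;').replace(/</g, '&lt;')
    .replace(/>/g, '&gt;').replace(/"/g, '&quot;').replace(/'/g, '&apos;');
  const entries = Array.from(unique, u => '  <url><loc>' + escape(u) + '</loc></url>');
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    '</urlset>'
  ].join('\n');
}
```

In the console you could pass it `Array.from(document.querySelectorAll('a'), a => a.href)` and `location.href`. A single page rarely links to every page on a site, so a real sitemap generator would crawl from page to page and merge the results.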
